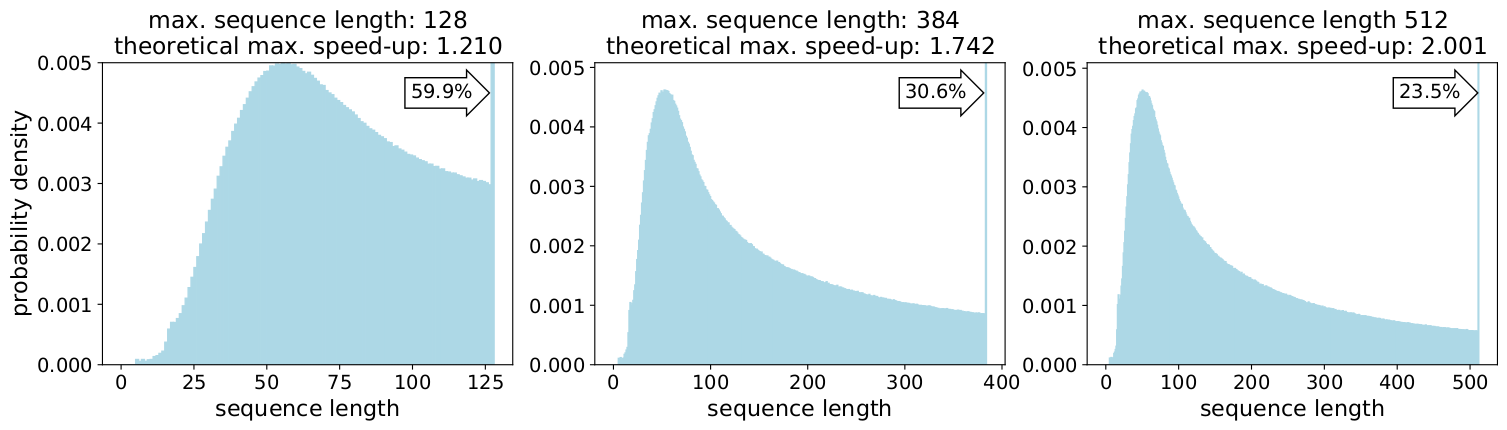

Results of BERT4TC-S with different sequence lengths on AGnews and DBPedia.

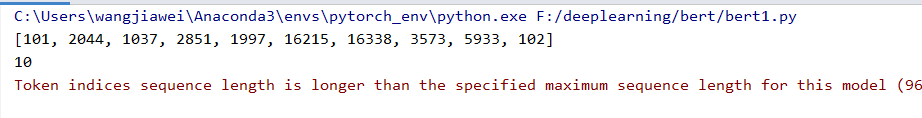

deep learning - Why do BERT classification do worse with longer sequence length? - Data Science Stack Exchange

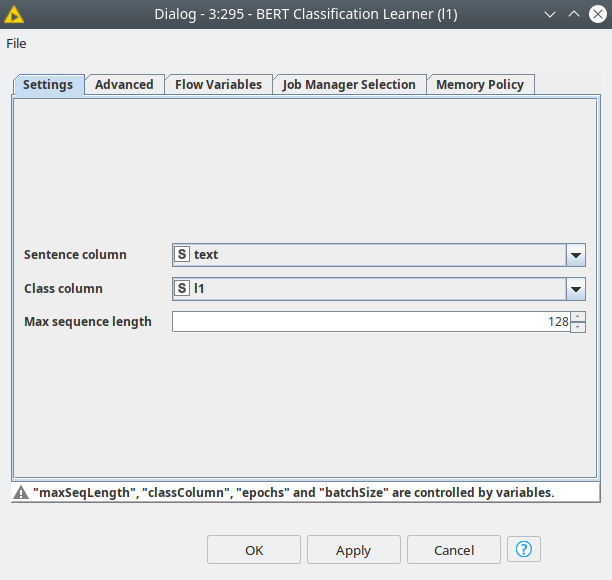

Sentiment Analysis with BERT and Transformers by Hugging Face using PyTorch and Python | Curiousily - Hacker's Guide to Machine Learning

Hugging Face on Twitter: "🛠The tokenizers now have a simple and backward compatible API with simple access to the most common use-cases: - no truncation and no padding - truncating to the

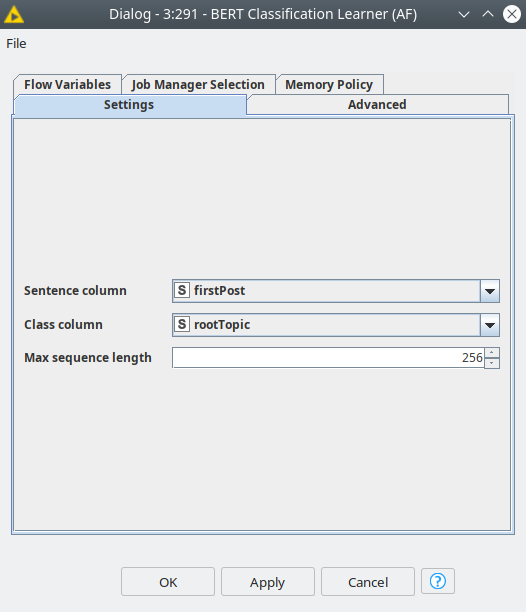

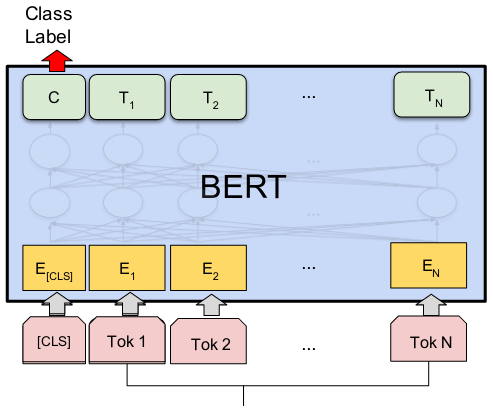

3: A visualisation of how inputs are passed through BERT with overlap...